You Have Just Six Emotions

At least it would be easier for the machines if we did

Efforts to enable machines to read our emotions are hitting a roadblock and, oddly enough, Charles Darwin (1809–1882), founder of popular evolution theory, plays a role in getting it wrong:

The world is being flooded with technology designed to monitor our emotions. Amazon’s Alexa is one of many virtual assistants that detect tone and timbre of voice in order to better understand commands. CCTV cameras can track faces through public space, and supposedly detect criminals before they commit crimes. Autonomous cars will one day be able to spot when drivers get road rage, and take control of the wheel.

But there’s a problem. While the technology is cutting-edge, it’s using an outdated scientific concept stating that all humans, everywhere, experience six basic emotions, and that we each express those emotions in the same way. By building a world filled with gadgets and surveillance systems that take this model as gospel, this obsolete view of emotion could end up becoming a self-fulfilling prophecy, as a vast range of human expressions around the world is forced into a narrow set of definable, machine-readable boxes. Dr Rich Firth-Godbehere, “Silicon Valley thinks everyone feels the same six emotions” at Quartz

How did we decide that there were only six emotions? The theatre would be impoverished and the fiction rack destitute if…

Psychologist Paul Ekman, billed at his website as “The world’s deception detection expert, co-discoverer of micro expressions and the inspiration behind the hit series, Lie to Me,” originated the idea. His interest in psychology began with a tragedy: His mother committed suicide when he was 14.

The conventional view at the time, represented by, for example, Margaret Mead (1901–1978), was that emotions are multifarious and fluid. But Ekman was drawn to Darwin’s idea that we have evolved universal emotions from an animal past. In the 1960s, he did research in New Guinea among the Fore people, who had allegedly had little contact with Europeans and, we are told, “this work strongly suggested that emotional facial expressions are biologically determined, as Darwin had predicted.” Emotions even a machine could read.

In 1955, Firth-Godbehere tells us, Mead wrote a foreword to Darwin’s 1872 “The Expression of the Emotions in Man and Animals” and regarded the claims advanced in it as “a historical curiosity.” But in 1998, Ekman was the one to write the foreword, and he defended those claims, leading a consensus.

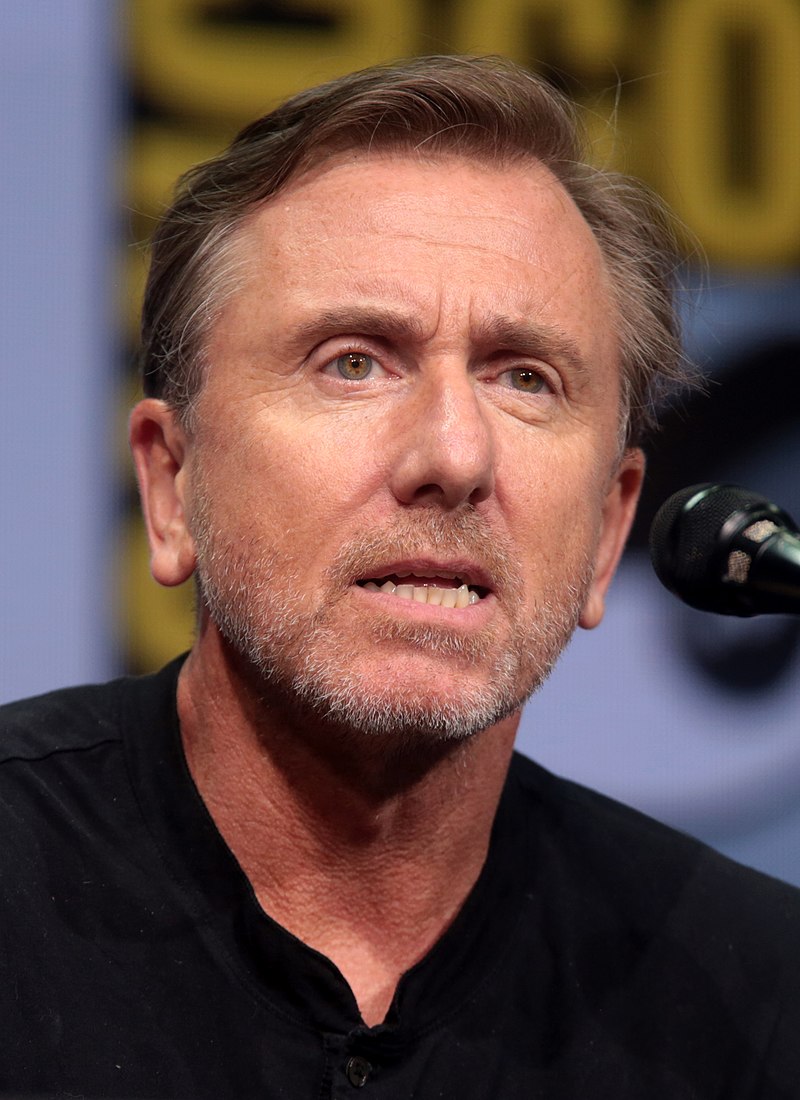

“Six emotions” thinking found its way into popular culture, in part via Lie to Me (2009–2011), where the curiously named “Cal Lightman”, played by Tim Roth, is “the world’s leading deception expert who studies facial expressions and involuntary body language to expose the truth behind the lies.”

Ekman/Lightman is portrayed as breaking the code for understanding emotions. We are told, for example, with much show of authority,

Dr. Cal Lightman is the center focal point of the entire show. As a scientist, he has a high level of expertise at interpreting and detecting tiny “micro” expressions. It is these minute involuntary expressions on the face that last just milliseconds that give away usually hidden aspects of whether the person is telling the truth or not. It is Lightman’s abilities through his useful skills that can accurately detect when the person is telling the truth or not… It is Ekman that reveals, through Tim Roth’s character Cal Lightman, that humans use the same facial muscles all around the world in every language to express surprise, anger, despair and happiness. Originally, Darwin hypothesized against the belief of scientists for 100 years, that facial expressions are not determined by culture. “A Profile of Dr. Cal Lightman on Lie to me” at Forensic Psychology Online

To underline the fictional Lightman’s identity with Ekman, in the backstory, his mother committed suicide too:

In the show, Cal Lightman indicates that when he was a child his mother had been shuttled away to a mental hospital to be treated for an unknown disorder. She was finally able to convince the hospital doctors that she was well enough to enjoy a weekend pass away from the facility to spend with her husband and children. It was then that she committed suicide. Lightman was able to review the doctors’ sessions with his mother on video and studied her facial expressions. He could see that she appeared to be happy on camera, when obviously she was anything but. “A Profile of Dr. Cal Lightman on Lie to me” at Forensic Psychology Online

If only the doctors could have known what Darwin knew…

Never mind, today the machines know:

Gone are fallible human brains, replaced by emotion-detecting AI that watches humans in airports, through CCTV, and in police interview rooms. This isn’t science fiction—the sunglasses of some Chinese police officers already have face recognition technology built into them. Dr Rich Firth-Godbehere, “Silicon Valley thinks everyone feels the same six emotions” at Quartz

But, says Firth-Godbehere, a curious thing has happened to the mind-reading machines: “every one of these systems seems to run into a problem of some kind when progressing beyond small-scale trials. Once you try to apply the basic model of emotions at scale, instead of in a lab, it starts to look less infallible.”

What could have gone wrong?

Well, ominously, people who classify emotions for a living seem unsure as to whether there are four, five, six, seven, eight, nine, or ten. Each number is surrounded by a cottage industry of research, books, and materials. Other accepted systems surely exist. Firth-Godbehere summarizes, “despite everything, there still isn’t a definition of ‘emotions’ that everyone agrees on. Almost every paper for the last 50 years has included its own version.”

An embarrassing problem has developed as well: the Fore people had actually been in contact with Europeans. “There is only a low likelihood that any members of the Fore remained entirely isolated from the rest of the world by the late 1960s,” Firth-Godbehere says. Also, Ekman’s claims have not held up under testing. His facial expression photographs were exaggerated and unrealistic. Firth-Godbehere adds: “More recently, a group led by psychologist Lisa Feldman Barrett has found that if you provide a wide range of facial expressions in photos, and allow participants to group them into categories of their choosing, those categories don’t match from one culture to the next.”

But the machines still believe. Firth-Godbehere asks, “Do we really want these devices and systems to keep us calm, judge our road rage, or spot our criminal tendencies? How long before someone is wrongly accused and convicted for something that a pair of sunglasses reports they were going to do?” He worries that we will simply learn to show fake emotions, to avoid problems with detection systems foisted on us.

We could do that. We could also keep in mind that we vote on and pay for security systems. And that we are in no way obliged to obey the dictates of fashions in psychology, however popular they may be, for however long, in the academy.

See also: Senior Google scientist quits over Google’s censorship in China. He believes it “contravenes widely accepted principles of international law and human rights.” Some believe that any censorship system that a human being can develop can somehow be got around by another human being. China may provide a way of testing that.