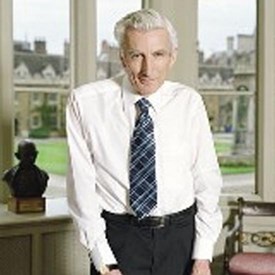

Noted Astronomer Envisions Cyborgs on Mars

Sir Martin Rees thinks of this “post-human evolution” as going beyond Darwin to “secular intelligent design.”

When astronomer Sir Martin Rees, winner of the 2011 Templeton Prize, thinks of the future, he thinks of cyborgs living on Mars, a topic he develops in his new book, On the Future. As he told NBC’s Denise Chow in an interview in New York,

It’s a fundamentally new development in that it’s a kind of evolution [that] is not the Darwinian natural selection, which over three-and-a-half billion years have led simple life to us human beings. It will be a version of secular intelligent design [where] we design entities which may have greater capacity than humans. This could happen in a few centuries rather than a few thousand centuries, which Darwinian selection requires in order to produce a new species. The key question is to what extent it will be flesh-and-blood, organic intelligence and to what extent it will be electronic.

He thinks of this predicted development as “secular intelligent design.”

He thinks of this predicted development as “secular intelligent design.”

Denise Chow: If that happens, would we still be considered human?

Martin Rees: This, of course, raises all kinds of philosophical questions. People imagine that we can download human brains one day into electronic machines. The question is: Is that really still you? If you were told your brain had been downloaded, would you be happy to be destroyed? What would happen if, for instance, many copies were made of you? Which would be your personal identity?

Also, the question of consciousness. We know that what’s special about us is not only that we can do all kinds of things that demand intelligence, but we are self aware. We have feelings and emotions. It’s a big uncertainty whether an electronic intelligence, which manifests the same capabilities as us, will necessarily have self-awareness. It could be that self-awareness emerges in any entity that’s especially complex and is plugged into the external world. It could be [that] it’s something which is peculiar to hardware made of flesh and blood, like we are, and would not be replicated by electronic machines.

Denise Chow, “Will humanity survive this century? Sir Martin Rees predicts ‘a bumpy ride’ ahead” at NBC News

AI apocalypse is certainly in the air. Elon Musk, Henry Kissinger, and the late Stephen Hawking have all predicted an AI doomsday. Industry professionals’ doubt and disparagement don’t seem to register with the media in the same way.

Rees, a former president of the Royal Society, goes further, however. He also predicts in his book that “a physics experiment could swallow up the entire universe.” When he received the Templeton Prize in 2011, he was noted for speculating that we could be living in a giant computer simulation. In 2017, he suggested that our universe may be lost in an “unbounded cosmic archipelago,” a multiverse where “we could all have avatars.” In such a multiverse, he argued, “Ours would belong to the unusual subset where there was a ‘lucky draw’ of cosmic numbers conducive to the emergence of complexity and consciousness. Its seemingly designed or fine-tuned features wouldn’t be surprising.”

The only type of universe we do not seem to be living in, on that view, is the simpler one we observe, about which we have at least some information.

In his 2003 book, Our Final Hour, Sir Martin gave humanity about a 50-50 chance of surviving the 21st century, due to “terror, error, and environmental disaster.” Asked by Chow how that was coming, he replied, “Well, we survived 18 years so far, but I do think we will have a bumpy ride through the century. I think it’s unlikely we’ll wipe ourselves out, but I do feel that there are all kinds of threats which we are in denial about and aren’t doing enough about.”

In his 2003 book, Our Final Hour, Sir Martin gave humanity about a 50-50 chance of surviving the 21st century, due to “terror, error, and environmental disaster.” Asked by Chow how that was coming, he replied, “Well, we survived 18 years so far, but I do think we will have a bumpy ride through the century. I think it’s unlikely we’ll wipe ourselves out, but I do feel that there are all kinds of threats which we are in denial about and aren’t doing enough about.”

So, at least in this universe, assuming that the aliens operating the computer sim are not deceiving us and our AI masters are prepared to show mercy, the threats from terror, error, and environmental disaster have been downgraded significantly from 50-50 annihilation to a “bumpy ride.”

Fellow Earthlings, the information outlined above certainly helps us estimate how seriously we should take the threats.

See also: AI machines taking over the world? It’s a cool apocalypse but does that make it more likely?

Software pioneer says general superhuman artificial intelligence is very unlikely. The concept, he argues, shows a lack of understanding of the nature of intelligence.

and

Machines just don’t do meaning. And that, says a computer science prof, is a key reason they won’t compete with humans.