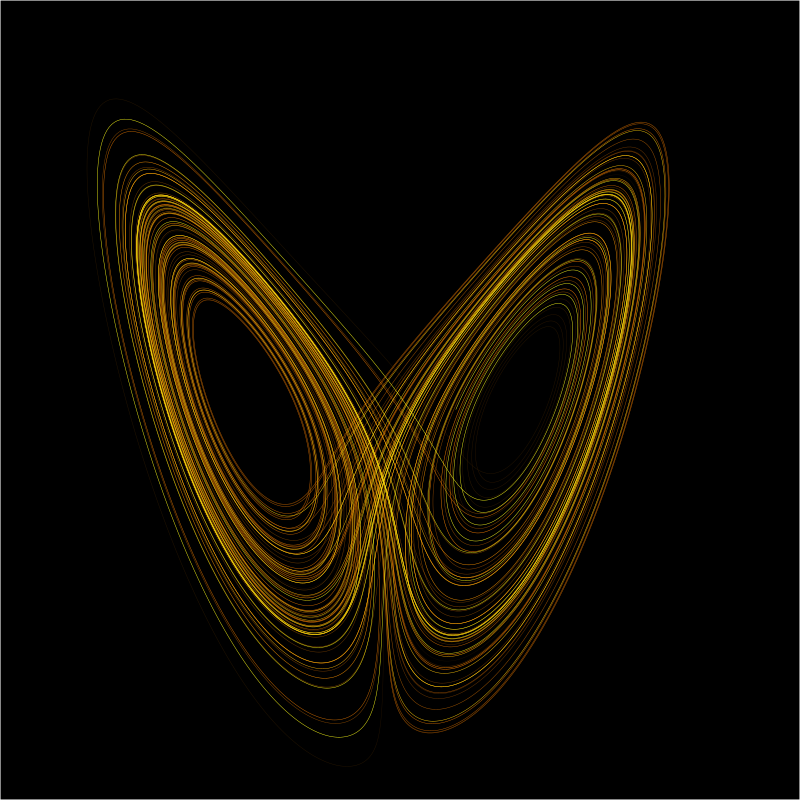

Does the Butterfly Effect Sharply Limit AI’s Power?

Our world and our lives are more complex, and even chaotic, than math allows

In 1963, MIT professor and research scientist Edward Lorenz (1917–2008) published a classic paper with a mouthful of a title, “Deterministic Nonperiodic Flow.” What he described became much better known as “the butterfly effect,” after a question he posed as the title of a 1972 lecture: “Does the flap of a butterfly’s wings in Brazil set off a tornado in Texas?”

Lorenz had been experimenting with early computer models of weather that used just twelve variables. While rerunning one trial, he entered starting values that differed from the original by only about 10⁻⁴ (roughly one part in ten thousand), not expecting so small a difference to matter. The results quickly diverged from his earlier run of the same experiment.
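
Lorenz’s experiment is easy to reproduce in miniature. The sketch below is an illustration under stated assumptions, not Lorenz’s own program: it uses the three-variable system from his 1963 paper with its classic parameters (σ = 10, ρ = 28, β = 8/3) rather than the twelve-variable weather model, a fixed-step fourth-order Runge-Kutta integrator, and an arbitrary starting point of (1, 1, 1). Two runs start 10⁻⁴ apart in x, and the program reports how far apart they drift.

```python
"""Sensitivity to initial conditions in the Lorenz (1963) system.

Assumptions (illustrative only): the classic three-variable equations with
sigma=10, rho=28, beta=8/3, integrated with fixed-step fourth-order
Runge-Kutta. This is NOT Lorenz's original twelve-variable weather model.
"""

SIGMA, RHO, BETA = 10.0, 28.0, 8.0 / 3.0


def lorenz(state):
    """Return the time derivative (dx/dt, dy/dt, dz/dt) at a given state."""
    x, y, z = state
    return (SIGMA * (y - x), x * (RHO - z) - y, x * y - BETA * z)


def rk4_step(state, dt):
    """Advance the state by one step of classic fourth-order Runge-Kutta."""
    k1 = lorenz(state)
    k2 = lorenz(tuple(s + 0.5 * dt * k for s, k in zip(state, k1)))
    k3 = lorenz(tuple(s + 0.5 * dt * k for s, k in zip(state, k2)))
    k4 = lorenz(tuple(s + dt * k for s, k in zip(state, k3)))
    return tuple(
        s + dt / 6.0 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def separation(a, b):
    """Euclidean distance between two states."""
    return sum((p - q) ** 2 for p, q in zip(a, b)) ** 0.5


def run(start, perturbation=1e-4, dt=0.01, steps=4000, report_every=500):
    """Integrate two runs whose x-coordinates differ by `perturbation`.

    Returns (time, separation) pairs sampled every `report_every` steps,
    including the initial separation at time 0.
    """
    a = start
    b = (start[0] + perturbation, start[1], start[2])
    history = [(0.0, separation(a, b))]
    for step in range(1, steps + 1):
        a, b = rk4_step(a, dt), rk4_step(b, dt)
        if step % report_every == 0:
            history.append((step * dt, separation(a, b)))
    return history


if __name__ == "__main__":
    for t, d in run((1.0, 1.0, 1.0)):
        print(f"t = {t:5.1f}   separation = {d:.6f}")
```

Running it should show the gap staying tiny at first and then growing until it is comparable to the size of the attractor itself. The exact numbers depend on the step size and starting point, which are arbitrary choices here.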

Lorenz had discovered that certain systems, notably those in which we live and move, such as weather, economies, and traffic, are so sensitive to their starting conditions that they are inherently unpredictable over the long run. No amount of computing power can guarantee an accurate result, because tiny variations in measurement may dramatically skew the results. Science writer James Gleick tells the story in his Pulitzer Prize-nominated book, Chaos: Making a New Science (1987; reissued 2008).
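
A standard idealization, offered here as a rough sketch rather than anything from the sources quoted in this article, makes “no amount of computing power” more precise. Suppose a small initial measurement error δ₀ grows roughly exponentially at a rate λ (the system’s largest Lyapunov exponent), and a forecast stays useful only until the error reaches some tolerance Δ:

$$
\delta(t) \approx \delta_0 \, e^{\lambda t}
\quad\Longrightarrow\quad
T_{\text{predict}} \approx \frac{1}{\lambda}\,\ln\frac{\Delta}{\delta_0}
$$

Because the forecast horizon grows only with the logarithm of 1/δ₀, each tenfold improvement in measurement precision buys the same fixed extra time, (ln 10)/λ. For the three-variable Lorenz system with its classic parameters, λ is roughly 0.9 per time unit, so each additional decimal place of precision extends the useful forecast by only about 2.6 time units.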

AI is nothing if not math. Even our most advanced AI relies on digitized measurements of the world, from the pixels of images to the readings of the lidar sensors on our cars. That lands AI right in the middle of Lorenz’s butterfly effect. One consequence is that we cannot really explain, or validate, the results of AI systems. Harvard philosopher and fellow David Weinberger says of Deep Patient, a deep learning tool for medical analysis:

“The number and complexity of contextual variables mean that Deep Patient simply cannot explain its diagnoses as a conceptual model that its human keepers can understand.”

David Weinberger, “Machine Learning Widens the Gap Between Knowledge and Understanding” at OneZero (Medium)

Weinberger sees this cloud of uncertainty as an advance over the cumbersome models sought by humans such as Lorenz, who wanted to predict the weather. But Lorenz’s study of nonlinear systems suggests a much more cautious approach, one that starts by asking whether we should believe the AI’s results at all. Doctors could trust Deep Patient when they agreed with its diagnosis, but that agreement says nothing about the independent value of results whose “reasoning” remains hidden.

Far from being a magical advance over dull-witted humans, complex AI systems may be hitting their limits. Joe McKendrick, writing at Forbes online, reviews Weinberger’s book Everyday Chaos: Technology, Complexity, and How We’re Thriving in a New World of Possibility (2019) and concludes:

“When it comes to employing the latest analytics in enterprises and beyond, even the best technology—predictive algorithms, artificial intelligence—can’t explain, and even reveal, the complexity and interactions that shape events and trends.”

Joe McKendrick, “Four Rules To Guide Expectations Of Artificial Intelligence” at Forbes

Our world and our lives are more complex, and even chaotic, than math allows. In short, AI systems, no matter how advanced and powerful, will always miss their predictive mark. We should trust and use them only when we can validate and verify the results or, alternatively, ensure enough safeguards to protect us if they go rogue.

Also by Brendan Dixon: “Artificial intelligence is really superficial intelligence” and “The ‘Superintelligent AI’ Myth: The problem that even the skeptical Deep Learning researcher left out”

Note: The image above is a plot of the Lorenz Attractor by Wikimol and Dschwen (CC BY-SA 3.0) showing the “butterfly.”