Possible Minds?: But What If the Minds Are Impossible?

Suppose we actually can’t create thinking AI? How would THAT change the world?

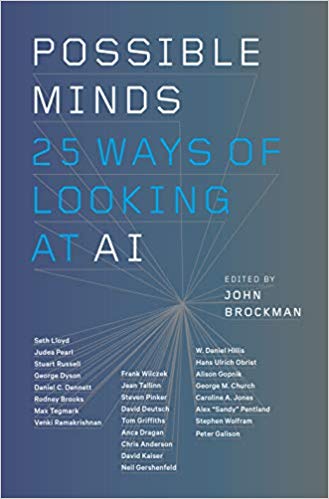

In Possible Minds: Twenty-Five Ways of Looking at AI (2019), prominent science literary agent John Brockman has put together a more eclectic mix of experts than Martin Ford did in Architects of Intelligence (2018). Brockman’s choices include multiverse proponent Max Tegmark, evolutionary psychology icon Steven Pinker, and materialist philosopher Daniel Dennett. That the two books came out only months apart, with only three overlapping experts, testifies to public interest in the topic.

Possible Minds’ contributors bounced their ideas off those of Norbert Wiener (1894-1964), who predicted greatly advanced technology in The Human Use of Human Beings: Cybernetics and Society (1950). Wiener saw the main problem as the grief we could inflict on each other using AI. His concerns are being realized today in China’s total surveillance society.

But the current popular focus seems to be on glitzier, unrealized calamities, characterized by one reviewer as “Will humans matter in an age of sentient machines?”:

Several of the writers agree that we’re still two to four decades away from artificial intelligence matching the power of the human brain, despite victories by computers in the realms of chess, Go and even “Jeopardy!” Give a computer a defined task and a bunch of input, and it can win. But no machine can yet match the human brain’s ability to process input via the senses, make complex and creative decisions or conceive a broader purpose — and even IBM’s Watson doesn’t touch the human mind’s efficiency, burning 85,000 watts of power to edge out human brains that run on 20 watts.

Rich Lord, “‘Possible Minds’: Will humans matter in an age of sentient machines?” at Pittsburgh Post-Gazette

What humans choose to do matters a great deal to the persecutors of the Uighurs in China.

Computer engineer Eric Holloway has looked at another matter Lord raises, the processing power of brains vs. computers, here at Mind Matters News:

The gameplay processing requirements between human and machine can also be compared. AlphaGo Zero requires four TPUs to make the decisions, which amounts to about 10 trillion operations per second, compared to the human’s estimated 50 operations per second. So there is a large disparity between human and AI efficiency in this case as well.
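To put the quoted figures side by side, here is a rough sketch of the arithmetic. The numbers are taken as given from the two passages quoted above (Lord's on power draw, Holloway's on operations per second); the variable and function names are illustrative only, and the underlying estimates are the quoted authors', not independently checked here.

```python
# Figures exactly as quoted in the article; all are rough estimates.
ALPHAGO_OPS_PER_SEC = 10e12  # "about 10 trillion operations per second"
HUMAN_OPS_PER_SEC = 50       # "the human's estimated 50 operations per second"
WATSON_WATTS = 85_000        # "burning 85,000 watts of power"
BRAIN_WATTS = 20             # "human brains that run on 20 watts"


def ratio(machine: float, human: float) -> float:
    """Return how many times larger the machine figure is than the human one."""
    return machine / human


ops_ratio = ratio(ALPHAGO_OPS_PER_SEC, HUMAN_OPS_PER_SEC)
power_ratio = ratio(WATSON_WATTS, BRAIN_WATTS)

print(f"Operations per second, machine vs. human: {ops_ratio:.1e}x")
print(f"Power draw, Watson vs. brain: {power_ratio:,.0f}x")
```

On these figures, the machine performs roughly 200 billion times as many operations per second as the human player, and Watson draws about 4,250 times the power of a human brain.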

The scale of the difference Holloway notes raises the question of whether the two processes are even doing the same thing. But books that examine such a question wouldn’t attract the same popular audience. The ones that do attract that audience, however serious their intention, tend to become a “thing.”

At Inc., Possible Minds is rated as business book #2 for 2019 (already!) and expertly buzzed: “Should we trust something potentially smarter than us? What is humanity’s role in a world ruled by algorithms?”

The question of whether we can create something smarter than us is an open one even for a gifted software engineer. But we should not expect that question to emerge from, or survive in, the froth around our “exhilarating, terrifying future.”

Such questions are the stuff of a long, cold AI winter. Here’s another: What if human-like AI turns out to be impossible because reasoning is not calculation and calculators do not reason? What if we actually can’t create thinking AI? Can we have a discussion about that?

See also: Artificial Intelligence: Prophets in Conflict

A total of 48 AI experts tell us what it all means, but their predictions strongly disagree

and

What Are the “Architects of Intelligence” actually designing?

Even their polite disagreements are fairly substantial