Machine Learning Dates Back To at Least 300 BC

Many people think that artificial intelligence and machine learning are recent phenomena. However, these techniques and ideas actually go back deep into human history. Machine learning has always been an important data-mining tool for humanity; it was simply given different names in different eras.

The key to machine learning is not machines but mathematics. There is nothing special about silicon and electricity. In fact, the first computers were mechanical, not electrical.

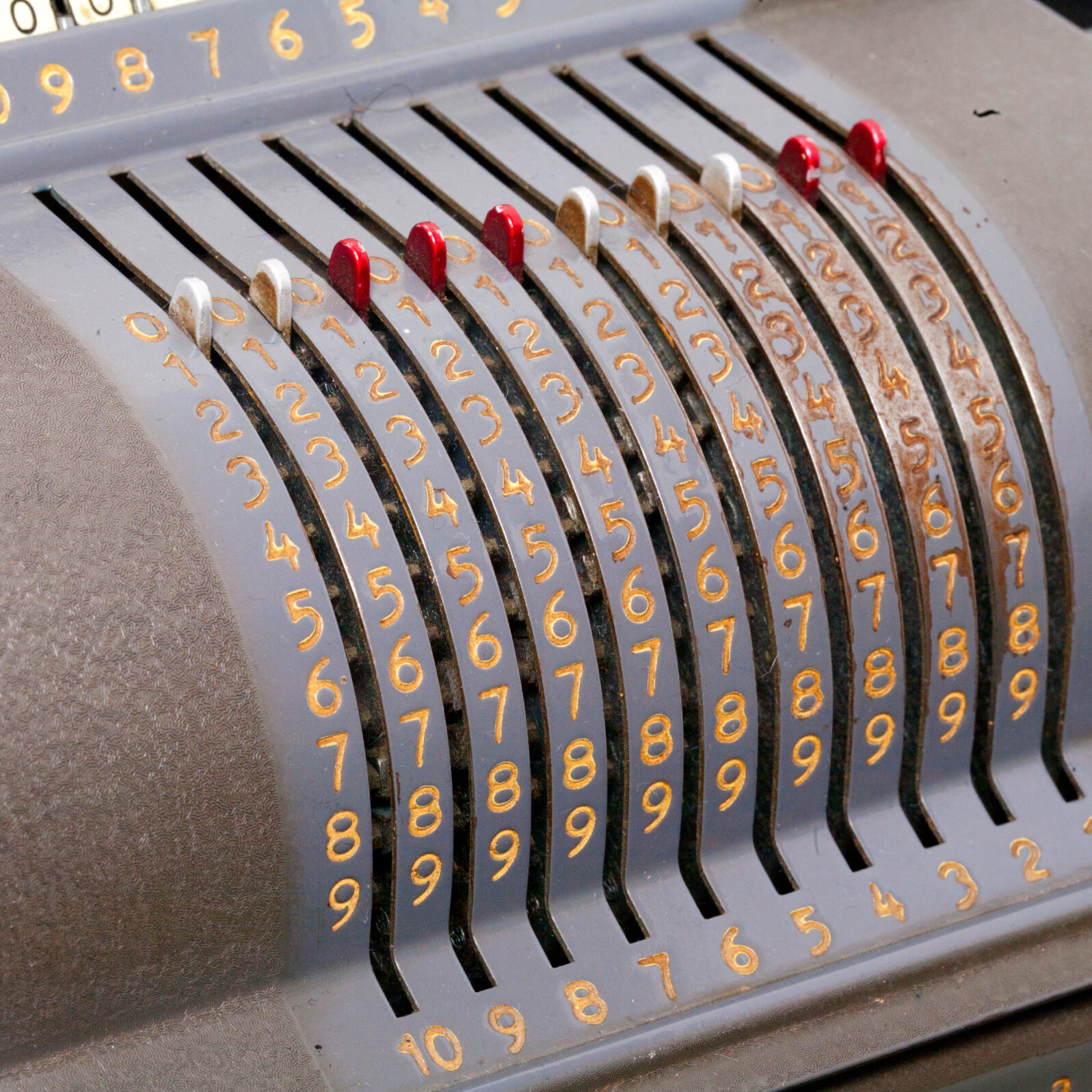

The devices designed by Charles Babbage (1791–1871) are the best known. Babbage’s Difference Engine was built in 2002, 153 years after it was designed, and his Analytical Engine was designed (though never completed) in 1837. But well before Babbage, others had invented mechanical computational devices. Wilhelm Schickard built a mechanical adding machine in 1623, and Blaise Pascal built a more complete and workable version in 1642.

Computers are just machines that do math using electricity. Whether we do the math with computers, mechanical calculators, pens and pencils, or our fingers, we are doing machine learning.

So, to get at the heart of the beginning of machine learning, we must ask ourselves, what does the machine do and how? The goal of machine learning is to take a dataset and use that dataset to make predictions about unknown values within it. Additionally, these predictions should work by rote: a human being’s creativity should not be needed to make them; the system itself should make the predictions.

The aspect of mathematics that deals with predicting unknown values in a dataset is known as curve fitting.

Curve fitting has been known to humanity since at least the ancient Babylonians. Babylonian astronomers used curve fitting techniques to fill in missing data points in astronomical tables. Unfortunately, the specific techniques they used have been lost to history. Today, computer-based machine learning gives us more methods to choose from, and we can work with far larger datasets.

The earliest techniques in curve fitting are known today as interpolation techniques. These techniques find curves that exactly match the known points, and then use those curves to determine unknown points in the graph. The simplest interpolation technique is linear interpolation, where the unknown value is determined by simply drawing a straight line between the two nearest points. This method was used by Hipparchus of Rhodes, for instance, in the second century B.C., to construct trigonometry tables.
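As a rough illustration of linear interpolation, here is a minimal Python sketch. The function name `linear_interpolate` and the small sine table are assumptions made for this example; they stand in for the kind of table an ancient astronomer might have filled in, not for any historical procedure.

```python
from bisect import bisect_right


def linear_interpolate(xs, ys, x):
    """Estimate y at x by drawing a straight line between the two nearest known points.

    xs must be sorted in increasing order, and x must lie within [xs[0], xs[-1]].
    """
    if not xs[0] <= x <= xs[-1]:
        raise ValueError("x is outside the tabulated range")
    # Index of the first tabulated x strictly greater than x, clamped so that
    # x == xs[-1] still uses the last interval.
    hi = min(bisect_right(xs, x), len(xs) - 1)
    lo = hi - 1
    fraction = (x - xs[lo]) / (xs[hi] - xs[lo])
    return ys[lo] + fraction * (ys[hi] - ys[lo])


# A hypothetical table of sines at 0, 30, 60, and 90 degrees.
angles = [0.0, 30.0, 60.0, 90.0]
sines = [0.0, 0.5, 0.8660254, 1.0]

# Estimate the sine of 45 degrees from its two neighbors in the table.
estimate = linear_interpolate(angles, sines, 45.0)
print(estimate)
```

The estimate lands exactly halfway between the tabulated values for 30 and 60 degrees, which is close to, but not the same as, the true sine of 45 degrees. That gap is the limitation that later polynomial methods tried to reduce.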

Ptolemy (c. 100 AD–170 AD) expanded the interpolation approach so as to use multiple variables. Essentially, given a set of boundary conditions, Ptolemy picked out the variable which influenced the outcome of a function most and determined the linear correlation of that variable. His “adaptive” linear technique is very similar to some machine learning models today.

However, linear interpolation is fairly limited. Creating an interpolating curve using polynomials allows for much more complex interpolations. Around 600 AD, Chinese astronomers started using second order polynomials for interpolation. By the 1300s it appears that they had generalized the principle, utilizing third order polynomials in their calculations. Mathematician Zhu Shijie (c. 1300 AD) presented fourth order problems in a textbook.

The general forms of polynomial interpolation with which we are most familiar today were developed between the 1600s and the early 1800s by Gregory, Newton, Lagrange, and Gauss. Multivariate interpolation techniques were explored in 1865 by Kronecker.
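To make polynomial interpolation concrete, here is a short Python sketch of the form usually credited to Lagrange. The function name `lagrange_interpolate` and the sample points are assumptions chosen for this example, and the known x values must all be distinct.

```python
def lagrange_interpolate(xs, ys, x):
    """Evaluate at x the polynomial of degree at most len(xs) - 1
    that passes exactly through every known point (xs[i], ys[i])."""
    total = 0.0
    for i, (xi, yi) in enumerate(zip(xs, ys)):
        # Basis polynomial: equals 1 at xi and 0 at every other known x.
        basis = 1.0
        for j, xj in enumerate(xs):
            if j != i:
                basis *= (x - xj) / (xi - xj)
        total += yi * basis
    return total


# Three hypothetical points taken from y = x^2. A second order polynomial
# through them should reproduce the curve at x = 3 as well.
known_x = [1.0, 2.0, 4.0]
known_y = [1.0, 4.0, 16.0]
print(lagrange_interpolate(known_x, known_y, 3.0))
```

With three known points, the curve is a second order polynomial, the same order the Chinese astronomers are described as using above. Adding more points raises the order of the polynomial.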

However, exact interpolation is not always what is needed. As science matured, so did the recognition of “error” in datasets, along with techniques for dealing with it. While you can fit any set of x/y coordinates exactly with a polynomial of sufficiently high degree (assuming one y value for each x), as more and more error creeps in, the polynomial starts modeling the error more than the actual values. For large samples of very noisy data, polynomial interpolations are basically useless.
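The effect can be sketched with a small Python experiment. Everything here is a made-up illustration: the underlying relationship is assumed to be y = x, and each of eight hypothetical measurements is off by 0.1 in alternating directions. The interpolating polynomial passes through every noisy point exactly, yet between the points it strays from the true line by more than the measurement error itself.

```python
def lagrange_interpolate(xs, ys, x):
    """Evaluate at x the polynomial that passes exactly through every (xs[i], ys[i])."""
    total = 0.0
    for i, (xi, yi) in enumerate(zip(xs, ys)):
        basis = 1.0
        for j, xj in enumerate(xs):
            if j != i:
                basis *= (x - xj) / (xi - xj)
        total += yi * basis
    return total


noise = 0.1
xs = [float(i) for i in range(8)]
# Hypothetical measurements of y = x, each off by +0.1 or -0.1 in alternation.
ys = [x + (noise if i % 2 == 0 else -noise) for i, x in enumerate(xs)]

# Ask the exact-fit polynomial for a value halfway between the first two points.
x_query = 0.5
true_value = x_query
interpolated = lagrange_interpolate(xs, ys, x_query)
interpolation_error = abs(interpolated - true_value)

print("error in each measurement:", noise)
print("error of the interpolated value:", interpolation_error)
```

Because the polynomial is forced through every point, it has no way to tell measurement error from real structure, so it reproduces the error and magnifies it between points.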

As a result, the 19th century saw the establishment of regression techniques. Regression techniques, instead of finding an exact fit, find the best fit. Some “best fit” techniques were used prior to the 1800s but they were fairly rudimentary, for example averaging together nearby observations, or Cotes’ rule for finding a weighted average.

However, in 1805, Adrien-Marie Legendre published a paper on the method of least squares for finding a regression line. In this technique, given noisy data, the goal is to find the line that minimizes the sum of the squared errors (each error being the difference between the value on the model line and the value in the data itself). This technique was later extended to higher order polynomials. Other methods for determining “best fit” were established as well.
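For a straight line, the least squares solution can be written down directly. The Python sketch below uses the standard closed-form formulas for the slope and intercept. The function names and the five data points are assumptions for illustration only.

```python
def least_squares_line(xs, ys):
    """Return (slope, intercept) of the line minimizing the sum of squared errors."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # How x and y vary together, and how x varies on its own.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def sum_squared_error(xs, ys, slope, intercept):
    """Sum of squared differences between the data and the model line."""
    return sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))


# Hypothetical noisy measurements that roughly follow y = x.
data_x = [0.0, 1.0, 2.0, 3.0, 4.0]
data_y = [0.1, 0.9, 2.2, 2.8, 4.0]

slope, intercept = least_squares_line(data_x, data_y)
print("best-fit line:", slope, intercept)
print("squared error of best fit:", sum_squared_error(data_x, data_y, slope, intercept))
print("squared error of y = x:   ", sum_squared_error(data_x, data_y, 1.0, 0.0))
```

Unlike an interpolating curve, the fitted line does not pass through every point. It accepts a small error at each point in exchange for the smallest total squared error, which here is lower than that of the line y = x.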

With regression analysis, the mathematical community formalized the notion of a “model”—an underlying representation of how the algorithm “thinks” about the data. Models essentially have four components:

1. a set of one or more input (i.e., independent) variables,

2. a set of parameters used to tune the model,

3. a relating function which combines the parameters and the input variables to produce

4. one or more output (i.e., dependent) variables.

Typically in regression analysis the relating function (3) is fixed, and the goal is to find the set of parameters (2) which best facilitates the conversion of input variables to outputs.

As an example, we may be attempting to model a curve with a quadratic equation. Quadratic equations have the general form “y = Ax^2 + Bx + C”. This general equation would be the regression model, “x” would be our input variable, “y” would be our output, and “A”, “B”, and “C” would be our parameters. So, the part of our model that is unknown is the tuning parameters—“A”, “B”, and “C”. Regression analysis generally attempts to find the best set of tuning parameters to get the best conversions from input variables to output variables.
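The quadratic example can be sketched in Python as follows. This is one possible approach, assuming the least squares criterion and solving the resulting three "normal equations" by Gaussian elimination. The helper names `solve_linear_system` and `fit_quadratic`, and the data points, are hypothetical.

```python
def solve_linear_system(matrix, rhs):
    """Solve matrix * unknowns = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    # Work on an augmented copy so the caller's data is left untouched.
    aug = [list(row) + [value] for row, value in zip(matrix, rhs)]
    for col in range(n):
        # Swap in the row with the largest entry in this column for stability.
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for row in range(col + 1, n):
            factor = aug[row][col] / aug[col][col]
            for k in range(col, n + 1):
                aug[row][k] -= factor * aug[col][k]
    # Back-substitution, from the last unknown to the first.
    unknowns = [0.0] * n
    for row in range(n - 1, -1, -1):
        known = sum(aug[row][k] * unknowns[k] for k in range(row + 1, n))
        unknowns[row] = (aug[row][n] - known) / aug[row][row]
    return unknowns


def fit_quadratic(xs, ys):
    """Return the parameters (A, B, C) of y = A*x^2 + B*x + C that minimize
    the sum of squared errors over the given points."""
    # Power sums s[p] = sum of x^p for p = 0..4.
    s = [sum(x ** p for x in xs) for p in range(5)]
    # Mixed sums t[p] = sum of y * x^p for p = 0..2.
    t = [sum(y * x ** p for x, y in zip(xs, ys)) for p in range(3)]
    normal_matrix = [
        [s[4], s[3], s[2]],
        [s[3], s[2], s[1]],
        [s[2], s[1], s[0]],
    ]
    a, b, c = solve_linear_system(normal_matrix, [t[2], t[1], t[0]])
    return a, b, c


# Hypothetical noisy measurements scattered around y = 2x^2 - 3x + 1.
sample_x = [-2.0, -1.0, 0.0, 1.0, 2.0, 3.0]
sample_y = [15.2, 5.9, 1.1, -0.1, 3.2, 9.8]

A, B, C = fit_quadratic(sample_x, sample_y)
print("A, B, C =", A, B, C)
```

The relating function (the quadratic form) is fixed in advance. Only the parameters A, B, and C are learned from the data, which is the division of labor described above.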

Improvements in regression techniques have led to:

- An increase in the number of criteria to find the best set of parameters for a model

- More model types to choose from

- More types of inputs and outputs (not just numbers, but labels, images, etc.)

- Techniques where the model is not chosen ahead of time, but deduced from the data

Machine learning, then, is basically the application of these techniques using a computer. Machine learning takes data and builds a model which can then be used to predict outcomes of future data.

The two main differences between machine learning and typical statistical regression analysis are that (1) the quantities of data are huge, and (2) there is less reliance on the computer operator’s own understanding of statistics. So the “interface” to building and using the model is more straightforward and less reliant on the user understanding advanced statistics.

In summary, machine learning is not a new technique but is simply a modern extension of a tool that we have had in our toolbox since the days of the Babylonians. It continues to serve us well to help us extrapolate our data to estimate the value of unknown results and to help find the signal in noisy data.

Author’s note: For those who want to know more about the history of interpolation and statistical regression, see:

Meijering, E., “A Chronology of Interpolation: From Ancient Astronomy to Modern Signal and Image Processing,” Proceedings of the IEEE 90(3).

Stigler, S., The History of Statistics, Harvard University Press (1986).

Sheynin, “On the History of the Principle of Least Squares,” Archive for History of Exact Sciences 46(1).

Also by Jonathan Bartlett:

1973 computer program: The world will end in 2040. Jonathan Bartlett offers some thoughts on a frantic, bizarre, but instructive computer-driven prediction.

and

Walter Bradley Center fellow Jonathan Bartlett discovers longstanding flaw in an aspect of elementary calculus