Tell Kids the Robot Is “It,” Not “He”

Teaching children to understand AI and robotics is part of a good education today. We are not truly likely to be ruled by AI overlords (as opposed to powerful people using AI). But even doubtful predictions may be self-fulfilling if enough impressionable people come to believe them. Children, for example.

We adults are aware of the limitations of AI. But if we talk about AI devices as if they were people, children—who often imbue even stuffed toys with complex personalities—may be easily confused.

Sue Shellenbarger, Work & Family columnist at The Wall Street Journal, warns that already, “Many children think robots are smarter than humans or imbue them with magical powers.” While she admits that the “long-term consequences” are still unclear, “an expanding body of research” suggests we need to train children to draw boundaries between “themselves and the technology.”

She notes that MIT researchers have shown that children as young as four years old can, when taught, recognize that they remain “smarter” than a computer that beats them at a game. (Perhaps the fans of AlphaGo should also take the course?)

Even small changes, such as calling computers “it” instead of “he” or “she” (or by given names such as Alexa, Siri, or Cortana), will help.

But we have so embedded computers into our lives that some sources think explicit training is also useful:

Not everyone thinks AI deserves special attention for students so young. Some argue that developing kindness, citizenship, or even a foreign language might serve students better than learning AI systems that could be outdated by the time they graduate. But Payne sees middle school as a unique time to start kids understanding the world they live in: it’s around ages 10 to 14 years that kids start to experience higher-level thoughts and deal with complex moral reasoning. And most of them have smartphones loaded with all sorts of AI.

Jenny Anderson, “MIT developed a course to teach tweens about the ethics of AI” at Quartz

The AI ethics course, developed by MIT graduate research assistant Blakeley H. Payne, teaches that all AI (and computer algorithms) contain decisions made by “stakeholders.” Those hidden decisions affect the results.

Early in the curriculum, the children play “AI Bingo,” which leads them to consider just why Instagram showed them an advertisement or why certain news articles bubbled to the top of their news app. Many of us adults could use the information too:

Instagram relies on machine learning based on your past behavior to create a unique feed for everyone. Even if you follow the exact same accounts as someone else, you’ll get a personalized feed based on how you interact with those accounts.

Josh Constine, “How Instagram’s algorithm works” at TechCrunch

At one point, Payne has the children design an algorithm “recipe” to make the “best” peanut butter sandwich. When the children then compare their algorithms, they see how each of them defined “best” and the effect that definition had on the choices offered.

Computers, even so-called artificially intelligent ones, are just tools, tools that we have made. They are not creative. They are not intelligent. They blindly follow the algorithms (or, as Payne says in her course, the “recipes”) we give them.

Shellenbarger ends her column by offering principles for raising an “AI-savvy child” that sound about right to us at MindMatters News. Here are some, with our own thoughts added:

● Use the pronoun “it” when referring to a robot.

● Be positive about the benefits of AI in general.

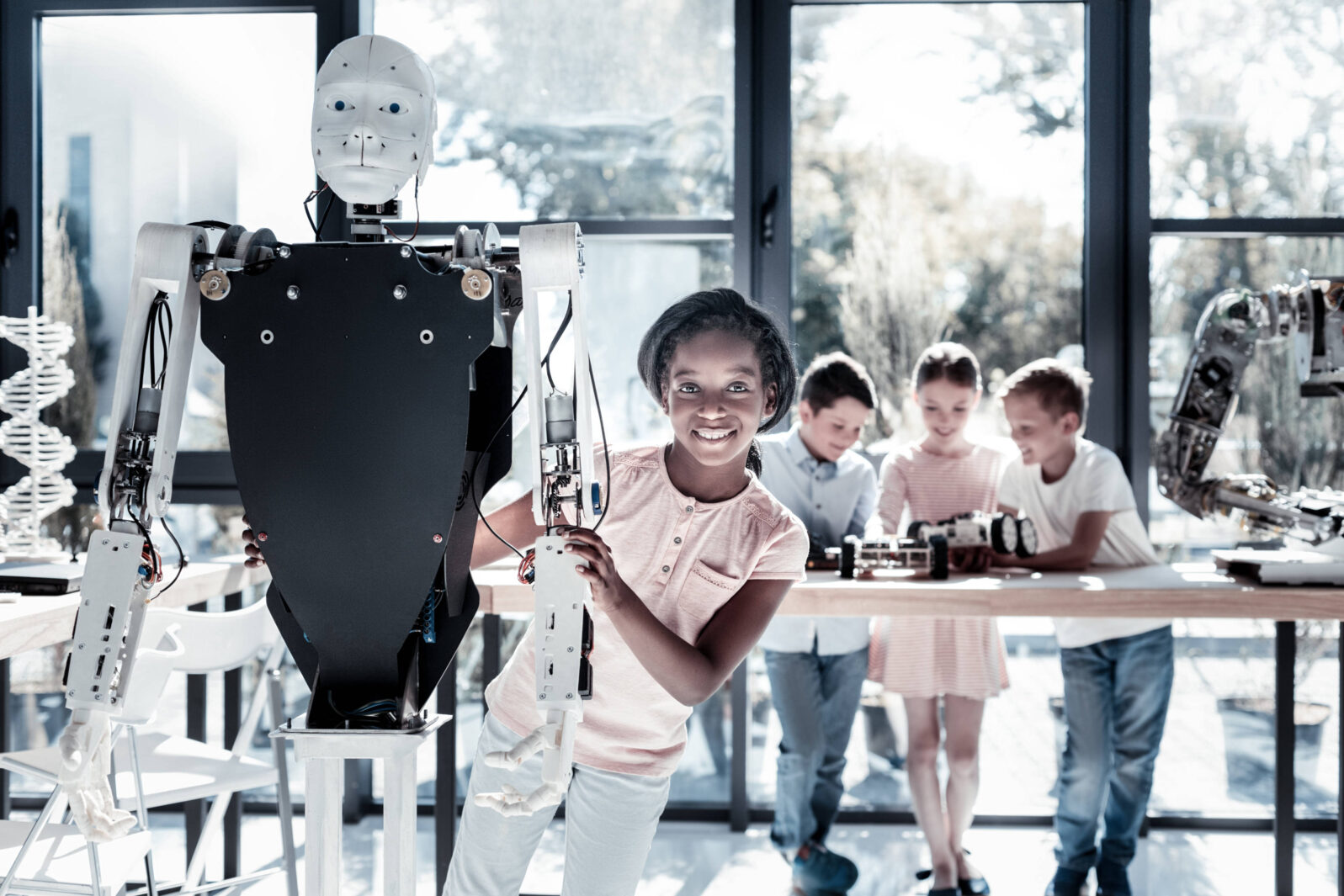

● Encourage children to explore how robots are designed and built.

● Help them understand that humans are the source of the AI devices’ intelligence.

● Invite them to consider the ethics of AI design. Should we make sure our bots show politeness and good sportsmanship?

● Encourage skeptical evaluation of the information received via smart toys and devices, just as with information received via the internet. Coming from such sources does not, by itself, make the information any more likely to be true.

● Be cautious about AI-propelled toys that are marketed as a child’s “best friend.” A teddy bear may be easier to outgrow than a sophisticated device.

Let’s also do our children a favor and challenge the AI-is-superior-to-humans chatter when we hear it. It’s not true and it’s hurtful. And, if we’re not careful, it may be self-fulfilling in some children’s lives.

If you enjoyed this item, you may also be interested in these articles by Brendan Dixon:

Lazy engineers treat AI as magic. When software engineers mostly use shared code, they save time but risk losing understanding.

and

Fake news thrives on fears of a robot takeover. The motion graphics artist tried to explain that he had faked the amazing robot video but…