Built to Save Us from Evil AI, OpenAI Now Dupes Us

When combined with several metric tons of data, its new GPT-3 sometimes looks like it is “thinking.” No, not really

OpenAI started life in 2015 as a non-profit organization whose mission was to safeguard humanity from malevolent artificial intelligence (AI). The founders’ goal was to ensure that when superhuman AI arrived, its inborn purpose was to serve humanity rather than subjugate it. In 2019, OpenAI transitioned to a for-profit company based in San Francisco and secured a one-billion-dollar investment from Microsoft. Things seem to have moved on from there.

It is a fair question whether superhuman AI is even possible, as we have pointed out repeatedly here at Mind Matters News. While some AI feats seem impressive, oftentimes when you look under the hood, what you find is a very expensive party trick or a staged demo. Usually, there is something real and worthwhile, but it never lives up to the hype.

In fact, as we’ve also pointed out, the real danger is not that AI will gain superhuman powers but rather that we will be convinced that it has, and we will cede to it important reasoning tasks that it is not capable of performing. As the sophistication of AI parlor tricks increases, that possibility increases along with it.

The latest release of OpenAI’s GPT-3 has convinced some people that the AI revolution has arrived. GPT-3 is indeed quite an engineering feat. It employs a pretty clever trick that allows for a lot of very slick and interesting surface-level natural language processing.

The problem is that, at its core, it is just a text-prediction engine, and it doesn’t go much beyond that. What it adds to the mix is the ability to parameterize text-prediction in interesting ways. When combined with several metric tons of data, sometimes it looks like it is “thinking.”
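To make “text prediction” concrete, here is a minimal sketch of the idea at toy scale. It is not how GPT-3 is built: GPT-3 is a vastly larger neural network, while this example simply counts which word most often follows another. The function names and the tiny corpus are invented for illustration.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers

def predict_next(followers, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = followers.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]

# Hypothetical toy corpus; a real system trains on vastly more text.
corpus = "a giraffe has two eyes . a spider has eight eyes . a giraffe has two ears ."
model = train_bigrams(corpus)

print(predict_next(model, "giraffe"))  # has
print(predict_next(model, "has"))      # two
print(predict_next(model, "foot"))     # None
```

The predictor “knows” that “has” tends to be followed by “two” only because that pairing is the most common one in its data. Nothing in it models giraffes, spiders, or eyes.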

For instance, several people have built code generators based on GPT-3, in which natural-language inputs produce user interfaces. Others have created entire, mostly human-looking articles based on just a sample of text. It can also semi-solve a few basic arithmetic problems when they are posed in natural language.

The problem, as is the case with all AIs, is that it is never actually thinking. The danger is that the demo cases presented in the media make it look like it is.

Kevin Lacker gave GPT-3 a whirl beyond just the demo cases. He found that if you give the natural language processor basic arithmetic questions, it will get the answers correct. That is, until you get past three digits. After that, the answers start being wrong.
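A probe of this kind is easy to sketch. The harness below is a hedged illustration, not Lacker’s actual procedure: it poses addition questions in plain English at increasing digit lengths and scores the replies. The `answer_fn` parameter stands for whatever system is being tested, and `fake_model` is a made-up stand-in that is simply programmed to fail past three digits. It is not GPT-3 and makes no call to any OpenAI service.

```python
import random

def make_question(digits, rng):
    """Build an English addition question whose operands have `digits` digits."""
    low, high = 10 ** (digits - 1), 10 ** digits - 1
    a, b = rng.randint(low, high), rng.randint(low, high)
    return f"What is {a} plus {b}?", a + b

def accuracy_by_digits(answer_fn, max_digits=6, trials=20, seed=0):
    """Return {digit count: fraction of questions answered correctly}."""
    rng = random.Random(seed)
    results = {}
    for digits in range(1, max_digits + 1):
        correct = 0
        for _ in range(trials):
            question, expected = make_question(digits, rng)
            if answer_fn(question).strip() == str(expected):
                correct += 1
        results[digits] = correct / trials
    return results

def fake_model(question):
    """Hypothetical stand-in: right up to three digits, off by one beyond that."""
    words = question.rstrip("?").split()
    a, b = int(words[2]), int(words[4])
    total = a + b
    return str(total if max(a, b) < 1000 else total + 1)

results = accuracy_by_digits(fake_model)
for digits, score in results.items():
    print(f"{digits}-digit operands: {score:.0%} correct")
```

Breaking the score out by digit count is the point of the design: a single overall accuracy figure would hide exactly the cliff that matters to anyone relying on the answers.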

If you ask it, it will correctly answer that a giraffe has two eyes and a spider has eight eyes. However, it also thinks that your foot has two eyes and a blade of grass has one.

You can see that instant publicity for the impressive successes can lead some to think that the highly-trained text processor has actually developed a model of the world, and that it is reasoning about it. The problem is that misunderstanding its capabilities will lead people to rely on it for things that it can’t really do.

Suppose someone took its demonstrated ability to do basic arithmetic to three digits as indicating that it can actually do math (I mean, it’s a computer, we kind of expect it to do math already). Then imagine the problems they would encounter if they actually relied on it to do math in connection with providing some kind of product or service. They’re pretty safe until the fourth digit, but do they know that?

For basic natural language query processing, GPT-3 is a huge step forward. For public understanding of what AI is and does (and what we should rely on it for), its flashy demos have created misperceptions. That can have dangerous consequences.

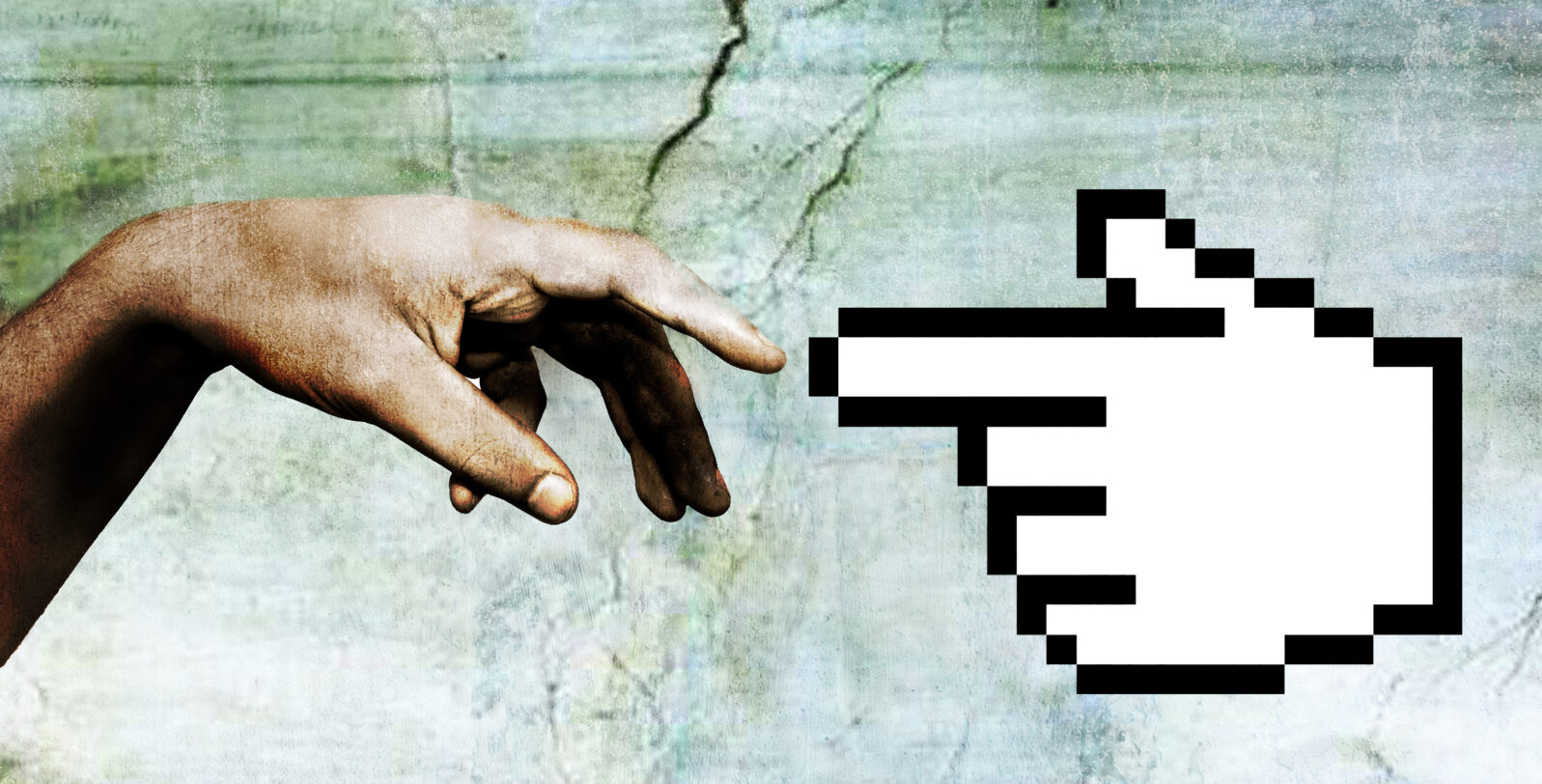

AI is the modern idol. Like our predecessors, we forget that the idols are made of ordinary things. And we get crushed when we imagine that they are our gods.
