Are Computers That Win at Chess Smarter Than Geniuses?

No, and we need to look at why they can win at chess without showing even basic common sense

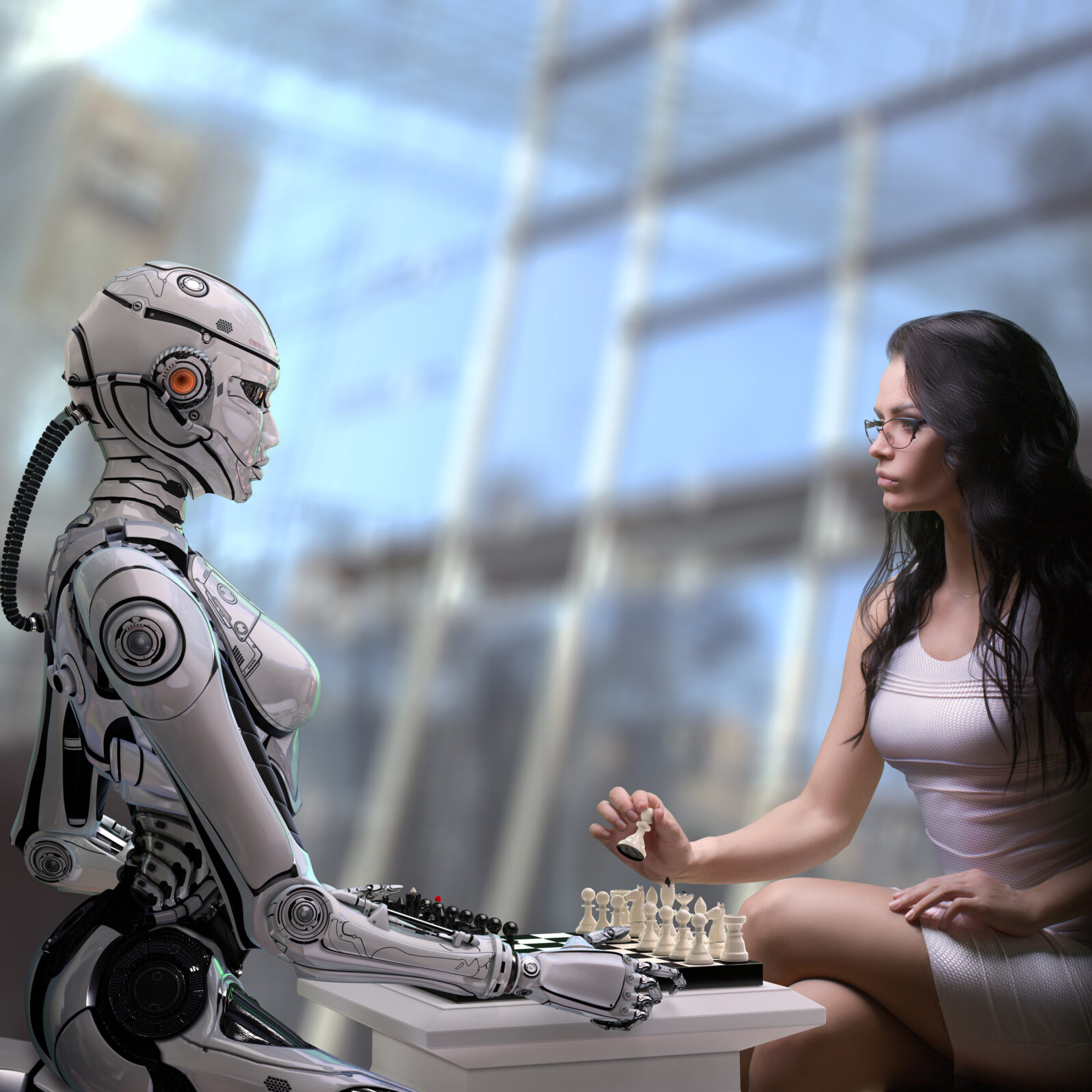

Big computers conquered chess quite easily. But then there was the Chinese game of go (pictured), estimated to be 4,000 years old, which offers far more “degrees of freedom” (possible moves, strategy, and rules) than chess: roughly 2×10¹⁷⁰ legal board positions. As futurist George Gilder tells us in Gaming AI, it was a rite of passage for aspiring intellects in Asia: “Go began as a rigorous rite of passage for Chinese gentlemen and diplomats, testing their intellectual skills and strategic prowess. Later, crossing the Sea of Japan, Go enthralled the Shogunate, which brought it into the Japanese Imperial Court and made it a national cult.” (p. 9)

Then AlphaGo, from Google’s DeepMind, appeared on the scene in 2016:

As the Chinese American titan Kai-Fu Lee explains in his bestseller AI Superpowers, the riveting encounter between man and machine across the Go board had a powerful effect on Asian youth. Though mostly unnoticed in the United States, AlphaGo’s 2016 defeat of Lee Sedol was avidly watched by 280 million Chinese, and Sedol’s loss was a shattering experience. The Chinese saw DeepMind as an alien system defeating an Asian man in the epitome of an Asian game.

George Gilder in Gaming AI (p. 13)

Thirty-three-year-old Korean Lee Se-dol later announced his retirement from the game. Meanwhile, Gilder tells us, that defeat, plus the 2017 defeat of Chinese champion Ke Jie, sparked a huge surge in Chinese investment in AI: “Less than two months after Ke Jie’s defeat, the Chinese government launched an ambitious plan to lead the world in artificial intelligence by 2030. Within a year, Chinese venture capitalists had already surpassed US venture capitalists in AI funding.”

AI went on to conquer poker, StarCraft II, and virtual aerial dogfights.

The machines won because improvements in machine learning techniques such as reinforcement learning enable much more effective data crunching. In fact, soon after the defeats of human go champions, a more sophisticated machine was beating a less sophisticated machine at go. As Gilder tells it, in 2017, Google’s DeepMind launched AlphaGo Zero. Using a “generic adversarial program,” AlphaGo Zero played itself billions of times and then went on to defeat AlphaGo 100–0 (p. 11). This incident went largely unremarked because it was a mere conflict between machines.

But what has really happened with computers, humans, and games is not what we are sometimes urged to think, that machines are rapidly developing human-like capacities. In all of these games, one feature stands out: The map is the territory.

Think of a simple game like checkers. There are 64 squares and each of two players is given 12 pieces. Each player tries to eliminate the other player’s pieces from the board, following the rules. Essentially, in checkers, there is nothing beyond the pieces, the board, and the official rules. Like go, it’s a map and a territory all in one.
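To see what “the map is the territory” means in practice, here is a minimal Python sketch. It does not model checkers. It assumes a much smaller, hypothetical take-away game (one pile of stones, each player removes one, two, or three, and whoever takes the last stone wins), and the names `MOVES`, `current_player_wins`, and `best_move` are illustrative only. Because the rules are the entire world of the game, plain calculation settles every position.

```python
from functools import lru_cache

# Hypothetical toy game, not taken from the article or from Gaming AI:
# players alternate removing 1, 2, or 3 stones; taking the last stone wins.
MOVES = (1, 2, 3)


@lru_cache(maxsize=None)
def current_player_wins(stones: int) -> bool:
    """Return True if the player to move can force a win from this pile."""
    # A position is winning if at least one legal move leaves the
    # opponent in a losing position. With 0 stones there are no legal
    # moves, so the player to move has already lost.
    return any(
        not current_player_wins(stones - m) for m in MOVES if m <= stones
    )


def best_move(stones: int):
    """Return a move that forces a win, or None if every move loses."""
    for m in MOVES:
        if m <= stones and not current_player_wins(stones - m):
            return m
    return None


if __name__ == "__main__":
    for pile in (8, 10):
        print(pile, current_player_wins(pile), best_move(pile))
```

In this toy game, calculation alone finds that a pile of 10 is a win for the player to move (take 2), while a pile of 8 is lost against best play. Nothing outside the rules can intervene, which is exactly what a real-world problem cannot promise.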

Games like chess, go, and poker are vastly more complex than checkers in their degrees of freedom. But they all resemble checkers in one important way: In all cases, the map is the territory. And that limits the resemblance to reality. As Gilder puts it, “Go is deterministic and ergodic; any specific arrangement of stones will always produce the same results, according to the rules of the game. The stones are at once symbols and objects; they are always mutually congruent.” (pp. 50–51)

In other words, the structure of a game rules out, by definition, the very types of events that occur constantly in the real world where, as many of us have found reason to complain, the map is not the territory.

Or, as Gilder goes on to say in Gaming AI,

Plausible on the Go board and other game arenas, these principles are absurd in real world situations. Symbols and objects are only roughly correlated. Diverging constantly are maps and territories, population statistics and crowds of people, climate data and the actual weather, the word and the thing, the idea and the act. Differences and errors add up as readily and relentlessly on gigahertz computers as lily pads on the famous exponential pond.

George Gilder in Gaming AI (p. 51)

Generally, AI succeeds wherever the skill required to win is calculation and the territory is only a map. For example, take IBM Watson’s win at Jeopardy in 2011. As Larry L. Linenschmidt of Hill Country Institute has pointed out, “Watson had, it would seem, a built-in advantage then by having infinite—maybe not infinite but virtually infinite—information available to it to do those matches.”

Indeed. But Watson later flopped in clinical medicine. That’s probably because computers only calculate, and not everything in the real-world practice of medicine is a matter of calculation.

Not every human intellectual effort involves calculation. That’s why increases in computing power cannot solve all our problems. Computers are not creative and they do not tolerate ambiguity well. Yet success in the real world consists largely in mastering these non-computable areas.

Science fiction has dreamed that ramped-up calculation will turn computers into machines that can think like humans. But even the most massive, most impressive calculations do not suddenly become creativity, for the same reason that maps do not suddenly become the real-world territory. To think otherwise is to believe in magic.

Note: George Gilder’s book, Gaming AI, is free for download here.
