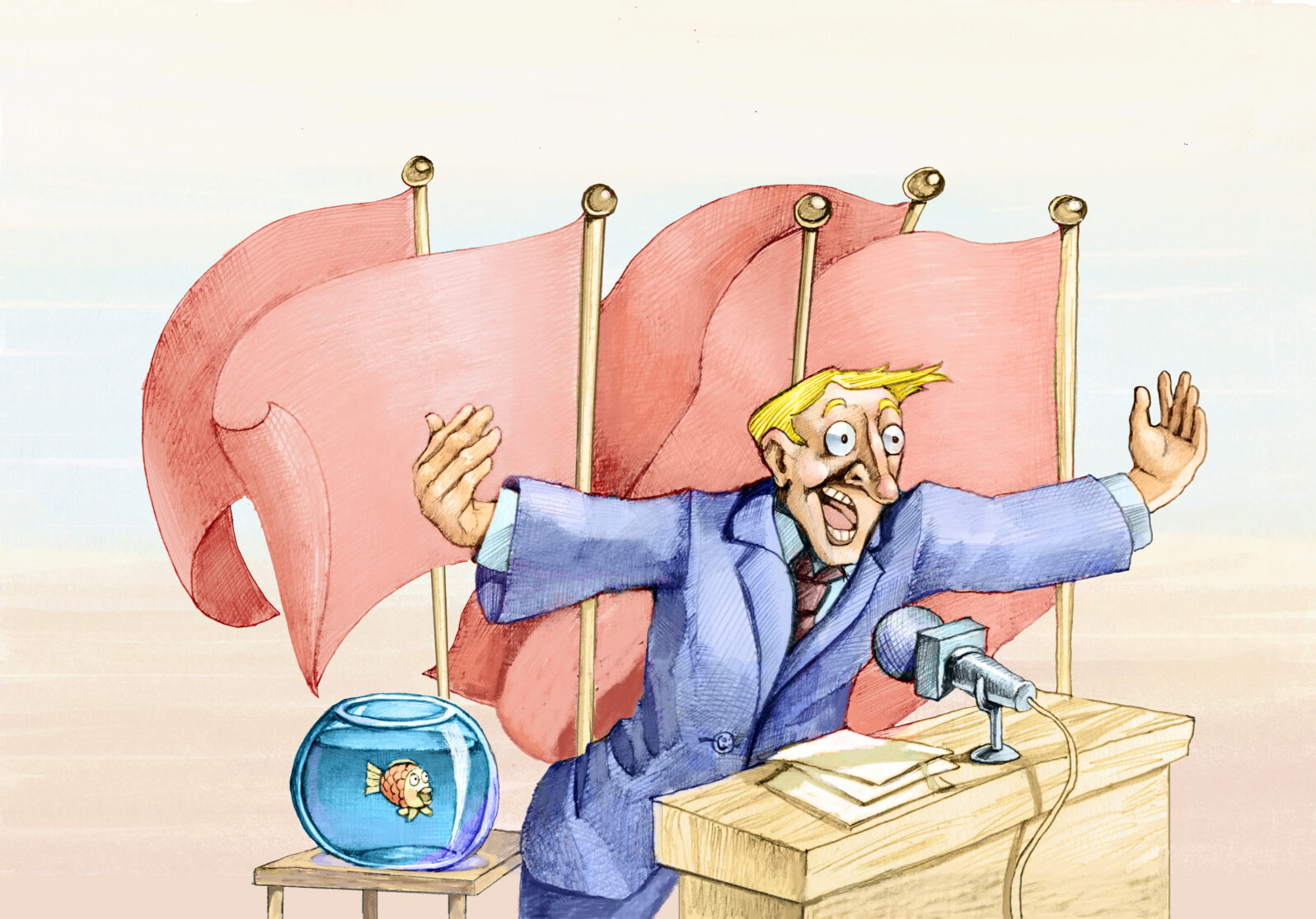

#12! AI Is Going To Solve All Our Problems Soon!

In our countdown for the Top Twelve AI Hypes of 2020

First, before we get started: The AI industry has been making real progress. But with real progress comes real hype. That figures. We spent all year covering the real progress. Now that we are all kicking up our feet, we are going to send up some of the hype. We used to only have 10 top hypes but now we have 12. If progress continues at this pace, we might end up with 59 by 2050… Anyway, our Walter Bradley Center director Robert J. Marks interviewed fellow computer nerds, members of our Brain Trust, Jonathan Bartlett and Eric Holloway on their picks last Saturday. And here’s #12!

The article itself is actually an admission rather than hype, but let’s look at it:

Starts at 2:00 min. A partial transcript and Show Notes follow.

It’s no secret that machine-learning models tuned and tweaked to near-perfect performance in the lab often fail in real settings. This is typically put down to a mismatch between the data the AI was trained and tested on and the data it encounters in the world, a problem known as data shift. For example, an AI trained to spot signs of disease in high-quality medical images will struggle with blurry or cropped images captured by a cheap camera in a busy clinic.

Will Douglas Heaven, “The way we train AI is fundamentally flawed” at MIT Technology Review
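To make the data shift idea concrete, here is a minimal sketch, not anything taken from the article. Everything in it is an assumption made up for illustration: the one-number "readings", the `train_threshold` and `accuracy` helpers, and the idea that a cheap camera halves every reading.

```python
# Toy illustration of data shift. All numbers and names are hypothetical.

def train_threshold(healthy, diseased):
    """Place the decision threshold midway between the two class means."""
    mean_healthy = sum(healthy) / len(healthy)
    mean_diseased = sum(diseased) / len(diseased)
    return (mean_healthy + mean_diseased) / 2

def accuracy(threshold, samples):
    """Fraction of (value, is_diseased) samples classified correctly."""
    correct = sum((value > threshold) == is_diseased
                  for value, is_diseased in samples)
    return correct / len(samples)

# "Lab" data: clean, well-separated readings.
lab_healthy = [0.8, 1.0, 1.2]
lab_diseased = [2.8, 3.0, 3.2]
threshold = train_threshold(lab_healthy, lab_diseased)

# Held-out lab samples look just like the training data.
lab_test = [(0.9, False), (1.1, False), (2.9, True), (3.1, True)]

# Assumed shift: the clinic's camera halves every reading.
clinic_test = [(value * 0.5, label) for value, label in lab_test]

lab_accuracy = accuracy(threshold, lab_test)
clinic_accuracy = accuracy(threshold, clinic_test)
print(lab_accuracy, clinic_accuracy)
```

Under these made-up assumptions, the classifier is perfect on lab-style data and no better than a coin flip in the clinic, even though nothing about the model changed. Only the data did.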

Eric Holloway: Yeah, this is actually a really insightful article and it’s true. The problem they identify cuts across every major machine learning technique out there, because what we call AI is actually more properly called machine learning and essentially it’s just curve fitting. You have a bunch of data points and you find the best curve that fits those data points, although it’s a little bit more complex than just a curve, but it’s the same idea. But if you keep that idea in your head, it’s also easy to see why there’s a problem.

Let’s start with a really simple example. Let’s say you have a 2D graph and you have a single data point on that graph.

Okay. Now, I ask you to fit the best line you can, to that single data point. There are so many different lines that can fit that one data point, and they’re all very different lines from each other. And that in a nutshell is the problem with modern AI. Even though we have millions or billions or even trillions of data points, the models themselves that are being trained on these data points are still like this line being trained on a single dot.

The models themselves are so, so, so complex that even with billions or trillions of data points, the models are still very underspecified. And like with the dot example, you can have both a line sloping up and a line sloping down, which both perfectly fit that dot, but on other data have very different predictions. And so that’s the problem with modern AI. Even with these fancy techniques like deep learning, you’ll have many different models that fit the same vast data sets.
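The single-dot example can be sketched in a few lines. This is only an illustration of the idea described above, not code from the podcast. The point `(2.0, 3.0)`, the two slopes, and the `line_through` helper are all arbitrary assumptions.

```python
# Two different lines that both fit one data point perfectly.
# The point, slopes, and helper name are hypothetical choices.

def line_through(point, slope):
    """Return the function f(x) for the line with this slope through point."""
    x0, y0 = point
    return lambda x: y0 + slope * (x - x0)

dot = (2.0, 3.0)                    # the single "training" point
rising = line_through(dot, 1.0)     # a line sloping up
falling = line_through(dot, -1.0)   # a line sloping down

# Both lines have zero error on the training data...
train_error_rising = abs(rising(dot[0]) - dot[1])
train_error_falling = abs(falling(dot[0]) - dot[1])

# ...but they disagree sharply on an input they were never fitted to.
x_new = 10.0
print(rising(x_new), falling(x_new))  # 11.0 -5.0
```

Nothing in the training data says which line is right. A model with far more adjustable parameters than the data can pin down is in the same position, just in many more dimensions.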

Jonathan Bartlett: The other thing is that anytime you have a model, there are things that match the model and things that don’t. There are things that are inside what you can expect and things that are not. And one of the problems with a lot of the AI work is that there isn’t really a clear definition always of why the data is being chosen and why those specific fields. Sometimes it’s just what could be measured. And also, there’s not a good clarity about what’s in bounds and out of bounds…

And what I see happening a lot with AI is that something may be within the bounds of the things that people think to train for, but then that’s not necessarily how it’s going to be used in the real world. And once you make that switch, then the models aren’t valid anymore.
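Here is a rough sketch of the in-bounds versus out-of-bounds point, under assumptions of our own choosing. The "real world" is taken to be `y = x * x`, the model is a straight line fitted by ordinary least squares, and training inputs only cover 0 to 1. The function names are hypothetical.

```python
# A model that looks fine inside its training range and fails outside it.
# The true process, training range, and function names are assumptions.

def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

def true_process(x):
    """Stand-in for how the world actually behaves."""
    return x * x

# Training data only covers inputs between 0 and 1.
train_xs = [i / 10 for i in range(11)]
train_ys = [true_process(x) for x in train_xs]
slope, intercept = fit_line(train_xs, train_ys)

def predict(x):
    return slope * x + intercept

# Worst error inside the range the model was trained on.
in_bounds_error = max(abs(predict(x) - true_process(x)) for x in train_xs)

# Error once the model is used well outside that range.
out_of_bounds_error = abs(predict(5.0) - true_process(5.0))

print(round(in_bounds_error, 2), round(out_of_bounds_error, 2))
```

With these particular choices, the worst in-bounds error is about 0.15 while the error at `x = 5.0` is about 20. The fitted line was never wrong about the data it saw. It simply says nothing reliable about inputs outside those bounds.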

(However we train AI, it doesn’t sound as though AI is going to solve all our problems any time soon.)

You may also enjoy a walk through Memory Lane:

The Top Ten AI hypes of 2018: How about this one: “With AI mind reading, you will NEVER have secrets again!” Well, it’s 2020 and you can still have secrets even now if you mind the Chinese proverb: “A secret is information known by one person.”

and, don’t go,

The Top Ten AI Hypes for 2019: Elon Musk’s robotaxis are driving everyone around everywhere, making money for their owners. That check is still in the 2019 Christmas mail.

Show Notes

- 01:13 | Introducing Jonathan Bartlett

- 01:39 | Introducing Dr. Eric Holloway

- 02:00 | #12: “The way we train AI is fundamentally flawed” (MIT Technology Review)

- 09:08 | #11: “Transparency and reproducibility in artificial intelligence” (Nature)

- 12:44 | #10: “Will Artificial Intelligence Ever Live Up to Its Hype?” (Scientific American)

- 16:58 | #9: “What to make of Erica, the AI Superstar Robot?” (Mind Matters News) and “A.I. Robot Cast in Lead Role of $70M Sci-Fi Film” (The Hollywood Reporter)

Additional Resources

- Jonathan Bartlett at Discovery.org

- Eric Holloway at Discovery.org

- #12: “The way we train AI is fundamentally flawed” (MIT Technology Review)

- #11: “Transparency and reproducibility in artificial intelligence” (Nature)

- Get George Gilder’s new book Gaming AI for FREE!

- Gaming AI: Why AI Can’t Think but Can Transform Jobs by George Gilder at Amazon

- #10: “Will Artificial Intelligence Ever Live Up to Its Hype?” (Scientific American)

- The Myth of Artificial Intelligence: Why Computers Can’t Think the Way We Do by Erik Larson at Harvard University Press

- #9: “What to make of Erica, the AI Superstar Robot?” (Mind Matters News) and “A.I. Robot Cast in Lead Role of $70M Sci-Fi Film” (The Hollywood Reporter)