Publish or Perish — Another Example of Goodhart’s Law

In becoming a target, publication has ceased to be a good measure.

The linchpin of scientific advances is that scientists publish their findings so that others can learn from them and build on their insights. This is why some books are rightly considered among the most influential mathematical and scientific books of all time:

Elements, Euclid, c. 300 B.C.

Physics, Aristotle, c. 330 B.C.

On the Revolutions of the Heavenly Spheres, Nicolaus Copernicus, 1543

Dialogue Concerning the Two Chief World Systems, Galileo Galilei, 1632

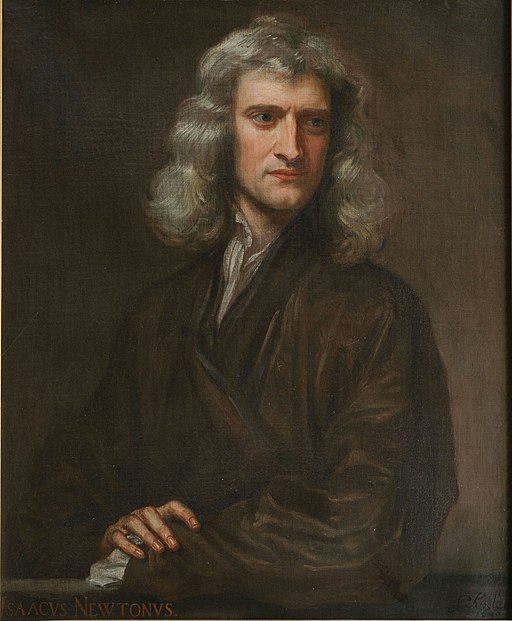

Mathematical Principles of Natural Philosophy, Isaac Newton, 1687

On the Origin of Species, Charles Darwin, 1859

As Newton said, “If I have seen further it is by standing on the shoulders of Giants.”

It seems logical to gauge the importance of modern-day researchers by how much they have published and how often their research has been cited by others. Thus, the cruel slogan "publish or perish" has become a brutal fact of life. Promotions, funding, and fame all hinge on a publication record showing that a researcher is worthy of being promoted, supported, and celebrated.

Every job interview, every promotion case, every grant application includes a publication list. Virtually every researcher has a website with a curriculum vitae (CV) link. The Internet has now made it feasible to tabulate citations too.

For example, in 2004 Google launched Google Scholar, a database of hundreds of millions of academic papers found by its web crawlers. Then Google created Scholar Citations, which lists a researcher's papers and the number of citations to each that its crawlers have found. Google also began compiling productivity indexes based on the number of papers an author has written and the number of times these papers have been cited. For example, the h-index (named after its creator, Jorge E. Hirsch) is the largest number h such that h of an author's papers have each been cited at least h times. My h-index is currently 24, meaning that 24 of my papers have each been cited by at least 24 other papers, but I do not have 25 papers that have each been cited at least 25 times.

Google Scholar Citations is a fast-and-easy (okay, lazy) way of assessing productivity and importance. As Google says, its citation indexes are intended to measure "visibility and influence." Citation indexes are now considered so important that some researchers include them on their CVs and webpages.

Unfortunately, the publish-or-perish culture has encouraged researchers to game the system, which undermines the usefulness of publication and citation counts. This is an example of Goodhart's law: "When a measure becomes a target, it ceases to be a good measure." The law is named after the British economist Charles Goodhart, who made the observation about monetary policy. The U.S. and British monetary authorities used to believe that there was a close statistical relationship between the rate of growth of the money supply and the rate of inflation, and that they could consequently set a target growth rate for the money supply that would achieve a desirable rate of inflation.

The problem is that the definition of the money supply is ambiguous and the relationship between money and inflation is tenuous. Is the money supply just the amount of cash floating around, or does it include checking account balances? What about funds in savings accounts and money market funds that can be easily spent? How about cash in brokerage accounts? What about the fact that most purchases are made with credit cards?

Governments tried several measures of the money supply (M0, M1, M2, M3, MZM) but, as Goodhart's law predicts, they all failed. Every measure used as a target ceased to be a good measure. Governments had to abandon money supply targets and now focus directly on inflation targets (currently 2 percent in the United Kingdom and the United States).

In the context of publish-or-perish, the widespread adoption of publication counts and citation indexes to gauge visibility and influence has caused them to cease to be good measures of visibility and influence. The first sign of this breakdown was explosive growth in the number of journals in response to researchers' insatiable demand for publications. In 2018 it was estimated that more than 3 million articles were published in more than 42,000 peer-reviewed academic journals.

It has been estimated that half of all peer-reviewed articles are not read by anyone other than the author, journal editor, and journal reviewers, though I cannot think of any feasible way of identifying articles that no one has read. It is nonetheless surely true that many articles are read by very few, but the publish-or-perish incentive structure makes it better to publish something no one reads than to not publish at all.

It also encourages a variety of unseemly tricks to pass peer review and accumulate citations.
